Xing Chen, PhD, James Herman, PhD, and J. Patrick Mayo, PhD, shared their expert insights in the Eye & Ear Foundation’s March 10th webinar, “Seeing with the Brain.” First up was Dr. Chen, an Assistant Professor in the Department of Ophthalmology who specializes in brain-computer interfaces, visual neuroscience, and blindness, and is the co-founder of a neurotechnology startup called Phosphoenix.

Developing Functional Vision

“The focus of my research is to develop a device that interfaces with the brain and might lay the foundation for blind people to regain some rudimentary form of functional vision in the future,” Dr. Chen said. She explained that this artificial vision would not look like normal vision, playing a video with a simulation of what artificial vision could look like – a bunch of dots that are likely of random colors in different sizes. These are called “phosphenes,” artificially produced dots of light. Seeing these would at least allow people to recognize objects around them.

When it comes to interfacing with the visual part of the brain, there are a few possible target regions, including the thalamus located deep in the brain, or the visual cortex at the back of the brain.

A visual prosthesis system works like this: A miniature video camera and eye tracker are positioned in the frame of glasses worn by the user. The video feed is processed by a mobile device which converts the images into instructions for interfacing with the brain. These instructions are sent wirelessly to the device, which is implanted in a visual area of the brain, such as the cortex or the thalamus. The brain implant delivers small electrical impulses to the tissue, generating visual percepts seen by the user.
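As a rough illustration of the "video feed to instructions" step described above, here is a minimal Python sketch. It is not the actual processing used in any device: the function name `frame_to_pattern`, the 10×10 grid, and the brightness threshold are all assumptions made for illustration. It simply average-pools a grayscale frame down to one value per electrode and switches an electrode on where the scene is bright.

```python
# Hypothetical sketch: turn a grayscale camera frame into an on/off
# stimulation pattern for a small electrode grid. The grid size and the
# threshold are illustrative assumptions, not values from the talk.

def frame_to_pattern(frame, grid_rows=10, grid_cols=10, threshold=128):
    """Average-pool a 2D list of 0-255 brightness values down to the
    electrode grid, then mark cells at or above `threshold` as active.

    Assumes the frame has at least grid_rows rows and grid_cols columns,
    so every grid cell covers at least one pixel.
    """
    height, width = len(frame), len(frame[0])
    pattern = []
    for r in range(grid_rows):
        # Pixel rows covered by this grid row
        top = r * height // grid_rows
        bottom = (r + 1) * height // grid_rows
        row = []
        for c in range(grid_cols):
            # Pixel columns covered by this grid column
            left = c * width // grid_cols
            right = (c + 1) * width // grid_cols
            block = [frame[y][x]
                     for y in range(top, bottom)
                     for x in range(left, right)]
            mean_brightness = sum(block) / len(block)
            row.append(mean_brightness >= threshold)
        pattern.append(row)
    return pattern


# Example: a 20x20 frame whose left half is bright and right half is dark
frame = [[255 if x < 10 else 0 for x in range(20)] for _ in range(20)]
pattern = frame_to_pattern(frame)
for row in pattern:
    print("".join("#" if active else "." for active in row))
```

In this toy example the left five columns of the grid come out active and the right five stay off. A real system would also need to account for where each electrode's phosphene actually appears in the visual field, which is the topic of the next section.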

Retinotopy

How is it that we are able to stimulate the brain with tiny electrical currents and have someone see anything at all? One of the most important concepts here is retinotopy, from “retina” and “topy” (i.e., a map of the input coming into the eyes via the retina). You can think of the visual field as being analogous to a computer screen composed of many pixels. Information coming from the visual field is processed by a corresponding “map” of neurons across the visual cortex, and the map is flipped – top to bottom, left to right.

There are different levels of processing that occur in the visual system. Light comes in through the eyes, hits the retina, goes through the optic nerves, and undergoes successive stages of processing in the brain. It first reaches the thalamus and then eventually is sent through the optic radiation to the visual cortex, which itself is divided into multiple areas.

The first stage of processing in the visual cortex is called the primary visual cortex. It has a total surface area – varying a lot from individual to individual – of approximately 50 square centimeters, or the size of a smartphone.

If Dr. Chen inserts an electrode into the primary visual cortex and records activity from the surrounding neurons (within a few millimeters from the electrode), she will notice that these neurons will only be active if there is a change in a very specific area of the visual field. This is known as the “receptive field” of a neuron, because neurons respond to information coming from that particular region of the visual field. Conversely, if she stimulates the neurons in this region by injecting current through the electrode, a person – whether sighted or blind – will see a dot of light located exactly in this region of the visual field.

Turning this concept into a visual prosthesis, if Dr. Chen places the electrode in a particular part of the brain and stimulates it, then the person would see a phosphene in the center of the visual field. If the electrode is moved to another location, the phosphene would appear in the periphery. By implanting multiple electrodes across the cortex, it is possible to obtain phosphenes in many different locations.

This research has been around since at least the 1960s; people have implanted arrays of electrodes to stimulate the brain in blind and sighted subjects. For example, studies in the U.S. and Spain, each of which began in 2018, have yielded valuable new insights. In the CORTIVIS study in Spain, collaborators of Dr. Chen have implanted four blind patients with an array of electrodes, producing some “extremely exciting” results. In the future, Dr. Chen expects several companies to start clinical studies, including Neuralink and startups such as Revision and her own, Phosphoenix.

Visual Cortex Stimulation

Dr. Chen has carried out experiments on monkeys and human subjects. In the CORTIVIS study, a Utah array with a 10×10 grid of intracortical electrodes was implanted in the visual cortex of blind human volunteers. Researchers use this device to record neuronal activity as well as to stimulate the brain. Dr. Chen took this technology a step further in sighted monkeys, using a device with over 1,000 electrodes.

As the subjects viewed a bar sweeping across the screen, a wave of activity swept across the visual cortex. This indicates that each electrode was sampling a specific location in the visual field (the receptive field of the recorded neurons), and the receptive fields collectively covered a large region. This showed that in theory, electrodes could be stimulated to generate phosphenes at these receptive field locations.

To test this, the monkeys were trained to carry out a phosphene detection task in which stimulation was delivered on a single electrode at a time. First, they were trained on a visual version of the task before any stimulation was done. They just had to look at a computer screen and wait for a dot to appear, and report that they saw the dot by looking at it. This way the researchers knew whether the monkeys really saw the dot. After they became good at this version of the task, the dot was replaced by stimulation of the visual cortex to create a phosphene while the monitor itself remained blank.

Each time stimulation was delivered, the monkeys looked at the phosphene. Researchers analyzed the reported locations of phosphenes. They hypothesized that if neurons with receptive fields in the fovea were stimulated, the phosphenes would appear at the fovea. If receptive fields were in the periphery, the phosphenes would appear in the periphery. Indeed, the reported locations of phosphenes closely matched the neurons’ receptive fields, indicating that the concept of retinotopy worked when creating artificial vision.

The most difficult task carried out was a letter discrimination task in which monkeys were trained to recognize letters made up of little dots. The visual version of this task had them looking at a computer monitor where they were presented with a letter made of dots (for example, the letter “A”). They were then presented with two target letters (such as an “A” and an “L”) and had to make an eye movement to the matching target (the “A”). Once the subjects had mastered the visual version of the task, instead of showing the monkeys a letter composed of dots on the computer monitor, stimulation was delivered on multiple electrodes at the same time. Researchers picked electrodes they thought would produce phosphenes in the shape of a given letter, either the letter “A” or “L,” for example. Both monkeys were able to do this task, demonstrating that it is possible to recognize simple shapes composed of phosphenes.
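To make the idea of "picking electrodes to form a letter" concrete, here is a minimal Python sketch. The webinar did not describe how the researchers made their selection, so the nearest-neighbor rule below is an assumption, as are the function name `pick_electrodes`, the electrode IDs, and the toy receptive-field coordinates. The sketch relies only on the retinotopy principle described earlier: each electrode has a known receptive-field location, and stimulating it is expected to produce a phosphene there.

```python
import math

# Hypothetical sketch: choose which electrodes to stimulate so that their
# phosphenes approximate a dot pattern. Nearest-neighbor matching is an
# illustrative assumption, not the method used in the study.

def pick_electrodes(receptive_fields, target_dots):
    """For each target dot (x, y), choose the not-yet-used electrode whose
    receptive-field center is closest. Returns electrode IDs in dot order."""
    chosen = []
    for dot in target_dots:
        best_id, best_distance = None, math.inf
        for electrode_id, center in receptive_fields.items():
            if electrode_id in chosen:
                continue  # each electrode contributes at most one phosphene
            distance = math.dist(dot, center)
            if distance < best_distance:
                best_id, best_distance = electrode_id, distance
        if best_id is not None:
            chosen.append(best_id)
    return chosen


# Toy example: 9 electrodes with receptive fields on a 3x3 grid,
# electrode ID = row * 3 + col, centered at (col, row).
receptive_fields = {row * 3 + col: (col, row)
                    for row in range(3) for col in range(3)}

# Dots tracing a letter "L": down the left edge, then along the bottom.
letter_L = [(0, 2), (0, 1), (0, 0), (1, 0), (2, 0)]

print(pick_electrodes(receptive_fields, letter_L))  # [6, 3, 0, 1, 2]
```

In practice, receptive fields are irregularly spaced and phosphenes vary in size and brightness, so a real selection procedure would likely involve more than distance alone.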

The goals of an ongoing project (supported by an NIH Director’s New Innovator Award) are to determine the spatial and temporal resolutions of phosphenes, whether future patients can gain functional vision, and to gauge safety and efficacy of this technique.

LGN Stimulation

Dr. Chen received the NIH Director’s New Innovator Award and the Blackrock Ecosystem Operator award for another project in which she is targeting a structure other than the visual cortex. Here, her team is implanting the thalamus – located deep in the brain – and targeting a small nucleus called the lateral geniculate nucleus (LGN).

“We think we’d be able to cover a lot more of the visual field if we target the LGN compared to the visual cortex, because the LGN is a very small, compact structure,” Dr. Chen said. Furthermore, the majority of the LGN represents the central region of the visual field.

Previous clinical studies have managed to target the visual cortex and obtain phosphenes in small patches, located up to ~30 degrees of visual angle. With the LGN, by contrast, it should be possible to cover the whole visual field. Previous research in monkeys has shown it is possible to stimulate the LGN, and monkeys report seeing phosphenes in the expected retinotopic locations.

In a collaboration with the Herman Lab, the goals of LGN stimulation are to determine the spatial and temporal resolution of phosphenes evoked via the LGN. A further question is whether there is potential for a keyhole surgical approach, similar to the deep brain stimulation (DBS) implants used in more than 100,000 patients worldwide.

Lastly, with support from a Wiegand Entrepreneurial Research Award via the EEF, Dr. Chen is developing a novel device through her startup, Phosphoenix (founded in 2019), for deep-brain neuromodulation. Their goal is to improve the efficacy of visual prostheses by obtaining a higher image resolution and gaining extensive coverage of the visual field by using high channel counts with ultra-flexible, high-density electrodes. To improve patient safety, their device has a compact form factor, is implanted via a keyhole surgery, and uses low stimulation currents.

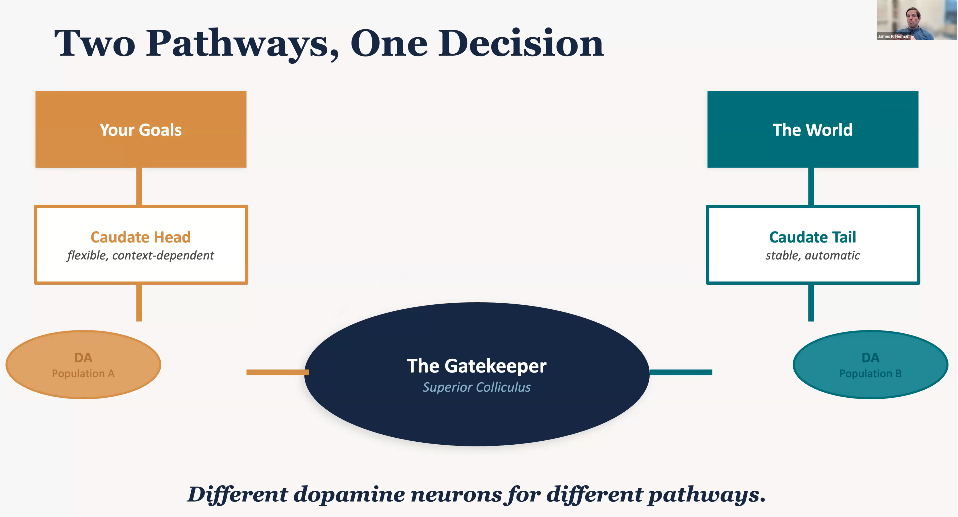

The Gatekeeper in Your Brain

James Herman, Assistant Professor of Ophthalmology with a secondary appointment in the Department of Bioengineering, discussed how deep brain circuits decide what you see. He asked viewers to imagine they were at a busy Saturday morning farmer’s market, with a lot on the agenda. You want to pick up some ripe tomatoes and get some sourdough for that soup you are making later. You scan through the crowd, see a friend waving, and spot a golden retriever that jumps on your lap and tries to get you to pet it. You smell some delicious pie cooking, which reminds you of the trip you took to GA last year with your family.

All of these things are competing all the time for your visual perception and for the contents of your thoughts and actions. You handle all of them deftly and easily, without even really noticing, as these thoughts and visual influences come, some of them asserting themselves from the environment and some from your own ideas and memory.

In other words, your brain is running two systems all the time. What you chose to notice: finding the ripest tomato, remembering sourdough for tonight, listening to your companion, and navigating through the crowd. What grabbed you: a flash of sunflowers you did not expect, a golden retriever who knows you, a familiar face in the crowd, and a scent that triggers a memory.

When deep brain structures are damaged, patients can still see perfectly well because their eyes work. But half of the world can vanish from their awareness, as can happen in Parkinson’s.

Indeed, what is less well known is that early stages of Parkinson’s can in some cases manifest as cognitive issues, which can range from visual neglect to an inability to focus, particularly on internally generated ideas. For example, when you go to the farmer’s market, the plans you have for buying sourdough and tomatoes may not manifest as strongly.

It is somewhat surprising that something as visual and sensory as what we perceive is controlled by deep brain structures known to affect movement. These patients have a perfectly intact visual apparatus, including all the beautiful visual cortical areas Dr. Chen talked about, the areas that need to be targeted in order to bring new visual information to the brain. In Parkinson’s, parts of the brain are perfectly intact, but other parts – subcortical parts not necessarily associated with vision – are damaged.

In fact, years before the first tremor, the gatekeeper begins to fail. In Parkinson’s, cognitive symptoms manifest as heightened distractibility and difficulty sustaining focus. These precede motor symptoms by years and predict functional decline independently.

A Broader Pattern

In Parkinson’s, goals cannot hold against distractions. In ADHD, the gatekeeper lets too much through. In schizophrenia, everything feels relevant. The prominent theory is aberrant salience: stimuli that a healthy person would consider irrelevant take on too much meaning and become extremely relevant regardless of importance.

All three of these diseases might be connected to dopamine. Current treatments that address these disorders, however, are blunt. They all broadly increase or decrease dopamine neuron activity, but this would be like trying to fix a complex wiring system in the home by turning the power up or down. “If you don’t have a precise wiring diagram that lets you specifically affect the pathways you are curious about, you will never fix the problem,” Dr. Herman said.

Unfortunately, the current approach is to have one dial for the whole system, when what is needed is a wiring diagram with separate circuits and targets. We have also been looking in the wrong place. The field has focused on the cortex for decades, but when the superior colliculus is silenced, attention collapses, even though the cortex still works perfectly. The superior colliculus is extremely important for deciding where you are going to look and what information you will focus on.

A main focus of the Herman Lab right now “is trying to understand whether the superior colliculus might also be understood as a main integrating hub that regulates this sort of broader tapestry of visual experiences that I described with the farmer’s market whereby we have to navigate which visual percepts and which influences on visual perception are going to drive our experiences from moment to moment,” Dr. Herman said.

The superior colliculus is the gatekeeper of what you see and what you do, weighing your goals (plans, priorities, task rules) against the world (motion, color, sudden changes). Dopamine tips the balance.

Some neurons in the superior colliculus care about things like knowing that something will be rewarding or that something will appear in a particular location with a high probability. But other neurons in the superior colliculus do not seem to care about these factors, instead caring far more about stimulus driven factors like whether a visual stimulus is surprising or familiar.

From Blunt Instrument to Precision

There are multiple types of dopamine receptors. We can get even more specific within this goal directed circuit. For example, D1 receptors can amplify your goals and turn up the volume on what matters to you. D2 receptors can suppress distractions and turn down the noise from what does not matter. Different receptors, different functions, and different treatment targets.

A major goal of Dr. Herman’s research is trying to develop a wiring diagram that is precise enough so that a clinician would have a sense of which dial to turn rather than flooding the whole system and hoping for the best. The farmer’s market example – that is not willpower, but a circuit. “We’re learning how to fix it,” Dr. Herman said. “There are circuits that evolution has built over hundreds of millions of years. When they work appropriately, you don’t even really notice. When they fail, the consequences are devastating for patients and families. We’re working on trying to understand exactly how this system works so we can inform the next generation of treatments that will help patients in this area.”

Eye Movements

J. Patrick Mayo, Assistant Professor in the Departments of Ophthalmology and Bioengineering, explained that eye movements are a critical part of vision. As a simple example, he talked about the challenges our brains face when trying to find Waldo in the famous Where’s Waldo pictures. When looking at one of those pictures, all the visual information in the image enters your eye. “I hope that as you’re watching this, you can actually see the perimeter of your monitor, maybe see things behind your monitor, and all of those patterns of light are entering your eye,” he said. The problem with finding Waldo is therefore not a problem related to what is going on in the eyeball, but how the abundance of information entering the eye is interpreted by the brain.

Did you know there are more eye movements than heartbeats per day? We have ~3 eye movements per second. Vision is an active behavior; our eyes are constantly moving. It is something we do that we are not aware we need to do.
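A quick back-of-the-envelope check of that comparison, written as a short Python calculation. The rate of about 3 eye movements per second comes from the talk; the 16 waking hours and the resting heart rate of 70 beats per minute are assumptions added here for illustration.

```python
# Rough comparison of daily eye movements and daily heartbeats.
EYE_MOVEMENTS_PER_SECOND = 3   # "~3 eye movements per second" (from the talk)
WAKING_HOURS = 16              # assumption: eye movements counted only while awake
HEART_RATE_BPM = 70            # assumption: typical resting heart rate

eye_movements_per_day = EYE_MOVEMENTS_PER_SECOND * WAKING_HOURS * 3600
heartbeats_per_day = HEART_RATE_BPM * 60 * 24  # the heart beats all 24 hours

print(eye_movements_per_day)   # 172800
print(heartbeats_per_day)      # 100800
```

Even when eye movements are counted only during waking hours, the estimate comes out well above the number of heartbeats in a full day under these assumptions.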

Take the whiskers of mice, which constantly move back and forth. Our eyes work similarly, as we try to determine things in the world, look around, find points of interest, and decide what we will do next. These mini decisions function in a “beautiful circuit” that can be used to understand the brain.

How do we extract information from patterns of light and make sense of them? In addition to the “gatekeeper” circuits that Dr. Herman just discussed, one of the main ways the brain does this is by moving our eyes around the screen to focus on new points of interest. We move our eyes because when light enters the eye, it impacts the back of the eyeball where photoreceptors are. Through the course of evolution, we developed a high density of photoreceptors at one point on the back of the eye called the fovea. As we move our eyes, visual information that comes in no longer hits the fovea and instead hits a different part of the back of the eye.

Neurobiological Basis of Vision

Photoreceptors are over 10x denser where the fovea is (sometimes referred to as the macula). When information from the world hits the fovea, we see very well. When it hits the periphery, we cannot see as well and move our eyes so that the information we want to see can be placed on the fovea. As primates, this is something we do all the time – move our eyes to see points of interest better. Cones in the fovea help us see more precisely, while rods in the periphery lead to worse vision in the periphery.

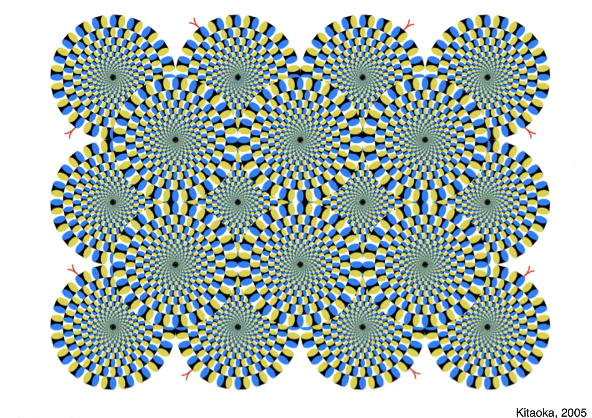

How do we know the eyes are constantly moving? Take this rotating snakes illusion. The illusion is that the snakes are moving, but there is actually no motion on the screen. The only things moving are your eyes. As your eyes move, they trick the brain into thinking there is motion on the screen. You can play with this as you want. Pick a spot on the screen, try to focus on it intently, and you can slow down the motion. As you move your eyes around, you will see that motion speeds up and continues.

Our eyes are our moving visual sensors. Another moving sensor we have is the smartphone. Think about what it would be like if you took video on your smartphone and moved it around the way you move your eyes. It would be very jumpy, not at all like what we experience when our eyes are moving. Why do we not get nauseous from our eye movements like we would if we watched such a video? The brain moves the eyes and creates stable, continuous vision. “I am intentionally using the word creates because the information that comes in through the eye needs to be interpreted by the brain,” Dr. Mayo said.

“A subtopic in my lab,” Dr. Mayo continued, “is the observation that not only does the world move when we move our eyes, but it is also effectively ‘blacked out’ when we close our eyelids to blink. I guarantee that everyone has blinked during this presentation, and no one has experienced seeing blackness when they blinked. So not only does the brain have to keep our perception of the world stable when the eyes move, but it also has to keep what we see as continuous even though we blink.”

Vision includes the eyes and the brain. When information comes in through the eye, the brain processes it and deliberates and then makes commands to move the body such as the eyes. When we move, that changes what we perceive again, repeating the interactive loop between what we see and how we respond to the world. “The cool and exciting thing about neuroscience and vision science for me is that this loop helps us determine how the brain works,” Dr. Mayo said.

Dr. Mayo’s lab uses technologies such as infrared video recordings to make high-definition recordings of eye movements. The lab seeks to understand how the brain stabilizes vision, how eye movements are coordinated with each other, and how eye tracking can improve clinical outcomes (for conditions such as nystagmus) and surgical training. For example, tracking eye movements in expert surgeons and teams of surgeons to see how they coordinate might help educate new surgeons and speed up their training.

Dr. Mayo’s research is vital for many reasons. The brain circuitry for eye movements and visual processing overlaps, and therefore understanding one helps us understand the other. More generally, understanding brain mechanisms for vision and action helps us understand schizophrenia, Parkinson’s, dementia, brain plasticity, and neurological disorders. In the clinic, eye movement research improves diagnostics and treatment efficacy, and in the operating room, it makes for more efficient surgical training as well as safer and better surgical outcomes.